The series

In the first post of our web application security blog series, we discussed a secure process and why it’s crucial for any organisation. Before hardening servers or web applications, a robust process must be in place to govern business as usual within the organisation.

In this article, we move our focus to web application security, specifically passive security. The blog provides a detailed look at security (so it’s pretty long!) but if you just want to look at a high-level summary, here it is...

Summary

Do-it-yourself passive security is an ongoing effort to keep every part of the system up to date. It requires a well-managed hosting infrastructure and good development practices, including a solid release management process and continuous integration.

Ensure every part of your system is up to date, with a reliable process for applying security updates automatically. A good log monitoring system is a must-have for reporting security and performance issues. A trusted managed hosting provider, such as Acquia or amazee.io, may be a good alternative to a DIY in-house managed solution. A CMS updates and security patching support agreement with a web vendor (such as Salsa Digital) takes complicated CMS patching processes and automated testing off your team’s shoulders.

Introduction to passive security

Passive security is security within the core of the system that doesn’t require any feedback or action if an attack is detected. Passive security also means keeping a system immune to exploitation and other types of attack by ensuring it’s always up to date. Unlike active security, passive security doesn’t block or even detect malicious traffic. Your application could be under attack with no (or very limited) attack detection and reporting in place.

In this article, we’ll be reviewing passive security in web-based applications. However, the same principles apply to many other areas of software security. To simplify the examples, we’ll use the Drupal content management system (CMS) as our web application.

The five steps are outlined in detail below.

1. On-time security patching

All software has vulnerabilities. Vulnerabilities have always existed and will continue to exist for the foreseeable future. They often remain unknown, hidden until exploited by malicious users. Unknown vulnerabilities carry a risk of data breaches. They’re challenging to discover, monitor and report.

Security patching (or more specifically on-time security patching) is the primary way to keep your web application immune to attacks or the exploitation of vulnerabilities. However, for high levels of security, additional steps should be taken to ensure security at every level.

When it comes to security patching, the primary security risk is during the window of time between a security update being released and when it’s applied to your production environment. We’ll discuss this problem later in this blog. You can read more about the importance of CMS patching at our page on open source security patching and updates.

2. Keep your system up to date

It’s important to keep every part of your system up to date. That includes the LAMP stack, the kernel and system reboots.

Keeping LAMP up to date

These days, a web stack hosts most of the web-based software. Whether this is LAMP, LEMP, WAMP or another X-AMP, it represents a stack of web server, some kind of persistent data storage (such as a database system), a file storage, a processing engine (PHP, Python, Node.js, Ruby and many more) and an environment where this X-AMP stack runs (this might be a Linux or any other OS).

Before even thinking about securing a web application, it’s vital to keep the LAMP stack up to date. As soon as your server is provisioned and online, it becomes a possible target for a variety of attacks.

Whether you run Linux or another OS in production, daily security updates are recommended. In Linux, depending on your distribution and its package manager (we cover the most popular Debian and RedHat based distributions), there might be a tool to automate this process reliably and safely.

Linux: yum-cron and unattended-upgrades

RedHat and CentOS come with the “yum-cron” package, whereas Debian-based distributions can use the “unattended-upgrades” package.

yum-cron

vim /etc/yum/yum-cron.conf

You can update the configuration as below, or customise to your requirements.

update_cmd = security

download_updates = yes

apply_updates = yes
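To confirm the settings above are really in place on each server, a small check can be scripted. The following is a minimal Python sketch, assuming the three options live in the [commands] section of yum-cron.conf; the function name check_yum_cron and the sample text are our own illustration, not part of yum-cron.

```python
import configparser

# Values recommended above; the [commands] section name is an assumption
# about the layout of /etc/yum/yum-cron.conf.
EXPECTED = {
    "update_cmd": "security",
    "download_updates": "yes",
    "apply_updates": "yes",
}


def check_yum_cron(conf_text):
    """Return the settings that are missing or differ from the recommended values."""
    parser = configparser.ConfigParser(interpolation=None, strict=False)
    parser.read_string(conf_text)
    section = parser["commands"] if parser.has_section("commands") else {}
    problems = {}
    for key, wanted in EXPECTED.items():
        actual = section.get(key)
        if actual is None or actual.strip().lower() != wanted:
            problems[key] = actual
    return problems


# Hypothetical file content used only for demonstration.
SAMPLE = """
[commands]
update_cmd = default
download_updates = yes
apply_updates = no
"""

if __name__ == "__main__":
    print(check_yum_cron(SAMPLE))
```

In practice you would pass in the content of the real configuration file and alert when the returned dictionary is not empty.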

You can also join the discussion on yum-cron automated updates in production systems at .

Unattended-upgrades

Install and configure unattended upgrades according to the documentation at .

Both packages offer a similar set of features and can be configured to download and apply security patches daily (or hourly). CentOS may provide additional granularity by updating only security-related packages. The safest way to roll out package updates and avoid a web application outage is to apply only the security updates. In my 20+ years doing systems administration, the likelihood of a disruption caused by such an update exists but is negligible. Modern package managers do an excellent job of keeping your stack functional after the upgrade.

Kernel security and system reboots

Kernel security is critical to maintaining passive security of the LAMP stack. In the next blog in the series, when we talk about active security, we’ll outline a way to prevent rootkits from loading. Without that protection, ensuring you’re running the latest kernel version is imperative.

You can check out the stats behind Linux Kernel vulnerabilities over the years at the corresponding CVE Details . The stats outline the significant increase in vulnerabilities discovered in Linux kernel. You can also subscribe to RSS feeds and get the latest updates regarding Linux kernel vulnerabilities and announcements.

When running daily system updates with yum-cron or unattended-upgrades, your kernel packages are updated on disk, but the running kernel is not replaced. To load the updated kernel, a system reboot is required.
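A pending reboot is easy to overlook, so it’s worth reporting. Below is a minimal Python sketch, assuming the Debian/Ubuntu convention of a flag file at /var/run/reboot-required; other distributions signal this differently, and the function names are our own.

```python
import os
import platform

# Debian/Ubuntu convention (assumption): this flag file is created when an
# installed update needs a reboot to take effect.
REBOOT_FLAG = "/var/run/reboot-required"


def reboot_pending(flag_path=REBOOT_FLAG):
    """Return True if the reboot flag file exists."""
    return os.path.exists(flag_path)


def report(flag_path=REBOOT_FLAG):
    """Build a one-line status message that includes the running kernel release."""
    running = platform.release()
    if reboot_pending(flag_path):
        return f"Reboot pending: kernel {running} is still running"
    return f"No reboot flag found: kernel {running}"


if __name__ == "__main__":
    print(report())
```

A message like this could be sent through the same email channel used for the rest of your server monitoring.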

To minimise unplanned downtime and maintain the highest levels of security, a rebootless kernel service is required. It allows you to automate kernel patch application without a system reboot.

Canonical began offering Livepatch starting from Ubuntu 16.04 and RedHat offers its (free but still in beta). Alternatively, Kernel is available for major Linux distributions.

3. Log monitoring and emails

With no Host Intrusion Detection System (HIDS) in place, it’s impossible to identify and receive alerts about possible attacks or problems with your LAMP stack. (We’ll cover HIDS in the next article about active security in web applications.) However, these steps will help you monitor your system:

Make sure you have email sending functional. In Linux OS, configuring a Postfix mail server isn’t too hard. We use the ansible-role-postfix Ansible playbook by Jeff , which allows automating all installation and configuration steps with required customisations.

Configure the root user’s email forwarder by adding the email address for server monitoring into /root/.forward

A log monitoring system is another vital part of web applications security. You can get real-time analytics and insights into your system security events. Use a good log monitoring system to automate the process of problem/attack detection, with escalation level alerting via email or SMS. Log monitoring and alerting could be achieved with simple tools like or HIDS (a more advanced tool that we use a lot with our web applications).
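To give a feel for what automated problem detection involves, here is a minimal Python sketch that counts failed SSH logins per source address and flags noisy sources. It assumes the usual OpenSSH “Failed password” log line; the threshold, function names and sample lines are hypothetical, and a real log monitoring tool does far more than this.

```python
import re
from collections import Counter

# Matches the usual OpenSSH failure line; other services need their own pattern.
FAILED_LOGIN = re.compile(
    r"Failed password for (?:invalid user )?(\S+) from (\S+) port \d+"
)


def failed_logins_by_source(lines):
    """Count failed login attempts per source address."""
    counts = Counter()
    for line in lines:
        match = FAILED_LOGIN.search(line)
        if match:
            counts[match.group(2)] += 1
    return counts


def sources_over_threshold(lines, threshold=3):
    """Return the source addresses with at least `threshold` failures, sorted."""
    counts = failed_logins_by_source(lines)
    return sorted(ip for ip, n in counts.items() if n >= threshold)


# Hypothetical log excerpt used only for demonstration.
SAMPLE_LOG = [
    "Mar  3 10:01:01 web1 sshd[811]: Failed password for root from 203.0.113.7 port 51122 ssh2",
    "Mar  3 10:01:03 web1 sshd[811]: Failed password for invalid user admin from 203.0.113.7 port 51124 ssh2",
    "Mar  3 10:01:05 web1 sshd[811]: Failed password for root from 203.0.113.7 port 51126 ssh2",
    "Mar  3 10:02:00 web1 sshd[900]: Accepted publickey for deploy from 198.51.100.4 port 40022 ssh2",
    "Mar  3 10:03:00 web1 sshd[901]: Failed password for root from 198.51.100.9 port 40100 ssh2",
]

if __name__ == "__main__":
    print(sources_over_threshold(SAMPLE_LOG))
```

The addresses returned are the ones you would escalate by email or SMS.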

4. Moving security into LAMP stack or above

LAMP stack is your second most vulnerable place after the web application itself. Ensure it is updated daily. To avoid or minimise downtime, using yum-cron or unattended-upgrades to download and install security-related software updates is recommended.

Drupal, WordPress and many other CMSs offer modules that claim to secure your CMS. While this sounds very attractive, it may be an illusion of security. These modules offer CMS-integrated active security techniques, but they run on the PHP engine itself and follow the CMS processing workflow. The PHP engine is the slowest part of the LAMP stack and a vulnerable one, so it’s a poor place to host the security layer. The CMS processing workflow may also become a bottleneck when processing incoming traffic. CMS security modules add a significant performance overhead and we generally don’t recommend using them. Such modules also don’t offer any interface for managing false positives and may interfere with normal use of your web application. A much better alternative is to move the (active) security into the web server (the Lua engine and mod_security, for example), offering significantly faster (1000 times faster) traffic processing. We will cover more of that in our next blog on active security methods.

You should also look at your web server, SSL, PHP, ClamAV, MySQL and Memcached.

Web server: Apache or Nginx

Apache, as the most popular free web server powering most of the internet, allows customisation of its configuration via the .htaccess file. The .htaccess file brings flexibility to customising web server rules and using this file from your favourite web application is an excellent way to keep your web application safe. An overloaded .htaccess file may introduce a performance penalty, so try keeping the file lightweight and avoid complex rewrite rules. A writable .htaccess file represents a security risk, so make sure it’s mounted in a read-only filesystem (the rest of your web application files should also be treated in this way) or at least has no write permission set.

Drupal 8 and 7 provide the system report page, outlining issues with securing your public, private or temporary files directories. By placing a special .htaccess file into these directories you prevent dangerous operations (such as PHP execution) in these directories. Refer to for more information.

Nginx doesn’t offer the flexibility of the .htaccess file like Apache does, but due to the more advanced performance of Nginx vs Apache, it’s the second most popular web server according to a W3Techs .

Nginx configuration should not allow the execution of web application files from writable locations (such as media file directories, cache directories, temporary or log directories). There are a few good, ready-to-use examples of Nginx configuration for the variety of web applications, such as Drupal, WordPress and others.
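Alongside the web server configuration, it can help to audit the writable locations themselves for script files that should never be there. The following is a minimal Python sketch, assuming a PHP stack; the extension list and the function name find_scripts are our own choices and should be adapted to your handler mapping.

```python
import os

# Extensions a PHP stack might execute (assumption; adjust to your setup).
SCRIPT_EXTENSIONS = (".php", ".phtml", ".phar")


def find_scripts(writable_dir):
    """Return a sorted list of script-like files found under a writable directory."""
    hits = []
    for root, _dirs, files in os.walk(writable_dir):
        for name in files:
            if name.lower().endswith(SCRIPT_EXTENSIONS):
                hits.append(os.path.join(root, name))
    return sorted(hits)


if __name__ == "__main__":
    # The path below is the Drupal public files directory used as an example
    # elsewhere in this article.
    for path in find_scripts("/var/www/html/docroot/sites/default/files"):
        print("Unexpected script in writable location:", path)
```

Any hit in a media, cache, temporary or log directory deserves a closer look, whether or not the web server would execute it.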

Note about SSL

This blog post doesn’t cover the implementation of SSL, but it is vital to keep your SSL library (such as openssl) up to date and to configure SSL ciphers and protocols carefully. A good testing tool can help you ensure your LAMP stack is well configured. We use SSL Server Test by Qualys SSL to make sure no vulnerabilities exist in our sites. The goal is to rank at least A (or better, A+).

PHP

The below steps will help with PHP security.

Keeping PHP up to date is vital to web application security. Some additional steps should be considered for securing PHP. Check the PHP executable handling section in for disable_functions and disable_classes. If you use drush, to prevent drush warnings about the disable_functions, you can add the disable_functions= to your user’s ~/.drush/drush.ini file. This setting disables the limitations for drush, but keeps them enabled for the web server.

When configuring PHP to serve requests from a pool of worker processes, you likely use PHP-FPM. With security in mind, separate multiple applications by implementing standalone PHP-FPM pools and use a unique user for each web pool.

PHP-FPM: Ensure the PHP user and group have no login shell available (in /etc/passwd, every PHP or web server user should have /sbin/nologin as its shell) and no write permissions inside the web root.

PHP-FPM: Restrict PHP-FPM to listen only on a local TCP socket or a socket file:

listen = 127.0.0.1:9000

PHP-FPM: Restrict connections to PHP-FPM to the local IP address only:

listen.allowed_clients = 127.0.0.1

PHP-FPM: Disable PHP version headers:

php_flag[expose_php] = off

PHP-FPM: If log monitoring and alerting is in place, ensure the PHP access log is enabled:

access.log = /var/log/fpm/php7.0-fpm-access.log

access.format = "%t \"%m %r%Q%q\" %s %{mili}dms %{kilo}Mkb %C%%"

catch_workers_output = yes

php_admin_flag[log_errors] = true
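When you manage several pools, it’s easy for one of them to end up listening on a public interface. Here is a minimal Python sketch that classifies a PHP-FPM listen value as local or not. It assumes the value is a socket path, an address with a port, or a bare port; hostnames are not resolved, and the function name is hypothetical.

```python
import ipaddress


def listen_is_local(listen_value):
    """Return True if a PHP-FPM `listen` value is a socket file or a loopback address."""
    value = listen_value.strip()
    if value.startswith("/"):
        return True  # Unix socket file
    host, sep, _port = value.rpartition(":")
    if not sep:
        return False  # bare port: treated as listening on all addresses
    host = host.strip("[]")  # IPv6 addresses are written as [addr]:port
    try:
        return ipaddress.ip_address(host).is_loopback
    except ValueError:
        return False  # not an IP literal; this sketch does not resolve names


if __name__ == "__main__":
    # The socket path below is a hypothetical example.
    for candidate in ("127.0.0.1:9000", "/run/php/php7.0-fpm.sock", "0.0.0.0:9000", "9000"):
        print(candidate, "->", listen_is_local(candidate))
```

A check like this only inspects configuration; a firewall rule is still the real safeguard if a pool is misconfigured.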

ClamAV

ClamAV is an open source antivirus and malware scanning application. Update ClamAV daily using the bundled cron job. Such a cron job is configured automatically if you install ClamAV using your OS package manager. Some third-party vendors may include a custom set of additional rules to improve malware detection in ClamAV and detect the most recent virus and malware signatures.

ClamAV could be run in two ways: 1) as a daemon, scanning all files on access; or 2) in application mode, scanning files on demand.

I have already compared these two ways of running ClamAV in my blog post Drupal ClamAV module versus Maldet to eliminate malware in uploaded .

MySQL

Keep MySQL (or any other database server) listening on the internal network interface. This network interface could be the “localhost” or a private IP address that is available inside the private (internal) network. This is the preferred way.

If running a database server on a public-facing network interface is necessary, make sure that a firewall is blocking any outside connections (except the whitelisted IP addresses) to your database server TCP socket.
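The whitelisting logic itself is simple, and sketching it can help when reviewing firewall rules. Below is a minimal Python illustration of matching a client address against a list of allowed networks. The networks shown are hypothetical, and the actual enforcement belongs in your firewall, not in application code.

```python
import ipaddress

# Hypothetical allowlist: an internal subnet plus one trusted host.
ALLOWED_SOURCES = ["10.0.0.0/24", "192.168.50.10/32"]


def is_allowed(client_ip, allowed=ALLOWED_SOURCES):
    """Return True if client_ip falls inside any allowed network."""
    ip = ipaddress.ip_address(client_ip)
    return any(ip in ipaddress.ip_network(net) for net in allowed)


if __name__ == "__main__":
    for candidate in ("10.0.0.17", "192.168.50.11", "8.8.8.8"):
        print(candidate, "->", "allow" if is_allowed(candidate) else "block")
```

Everything that doesn’t match the list should be dropped before it reaches the database port.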

When running an external database, or a cluster of databases (such as the Galera Cluster by MariaDB), ensure that only the internal, private network interfaces are in use. When running your database clusters in cross-region implementations, and to eliminate the risks of the man-in-the-middle attack, ensure SSL traffic encryption is in place. You can find out more on the Galera Cluster SSL Settings .

Memcached

Memcached was recently involved in a massive DoS . The only vulnerability that made this attack possible was the lack of default configuration to run the memcached service on an internal network interface. Memcached should reject any public connection attempts unless required. In the latter case, authentication might be necessary.

Based on your distribution, ensure your memcached.conf file has this configuration line:

For CentOS: /etc/sysconfig/memcached

For Debian: /etc/default/memcached

OPTIONS="-l 127.0.0.1"

External memcached instances (such as AWS ElastiCache for memcached) must have firewall policies in place to eliminate any unauthorised access.

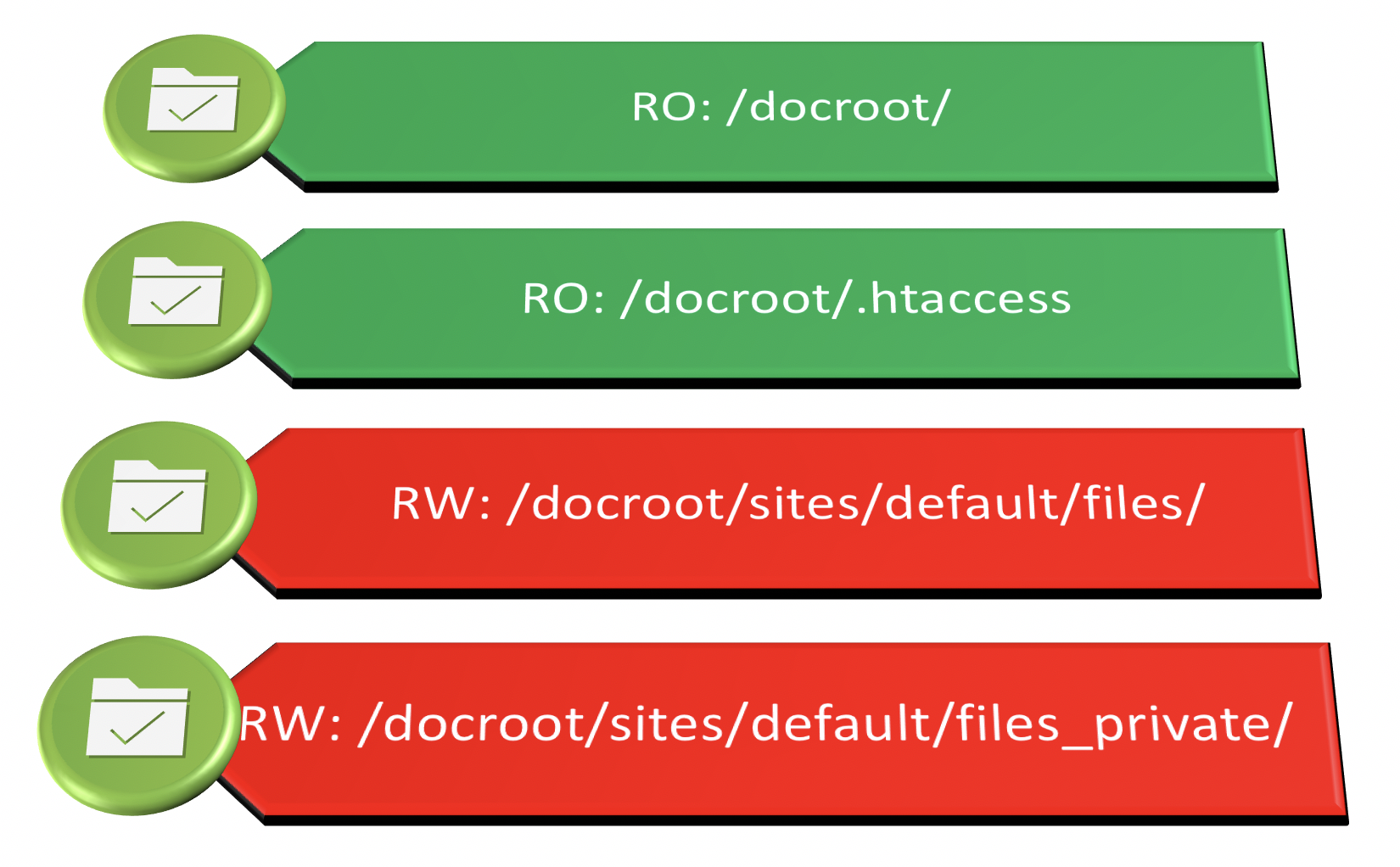

5. Read-only file system and CI

A must-have and a big step forward in passive security is to run the internet-facing web application from a read-only file system. Mounting the folder or an external volume in read-only mode, with additional mount options, helps prevent the execution of files and eliminates several attack classes altogether.

In the /etc/fstab, your volume should have additional options like this example. We assume the volume with your web application files is available as /dev/xvdb1 and uses an excellent XFS filesystem - customise this to your system if necessary:

/dev/xvdb1 - webroot of your web application, mounted as read-only filesystem

/dev/xvdb2 - public files directory and is a writable filesystem

/dev/xvdb1 /var/www/html/docroot xfs defaults,ro,nosuid,nodev,nofail 1 2

The ro and nosuid options improve the security of your web app. Any writable locations must be mounted separately with the rw option. For example, in Drupal, the sites/default/files directory should be writable by PHP:

/dev/xvdb2 /var/www/html/docroot/sites/default/files xfs defaults,nosuid,nodev,nofail 1 2
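Mount options are easy to lose during a rebuild or migration, so they’re worth verifying automatically. The following is a minimal Python sketch that parses an fstab entry and reports which hardening options are missing. The required set (ro, nosuid, nodev) mirrors the docroot example above; the function names are our own.

```python
# Options we expect on the read-only docroot mount, taken from the example above.
REQUIRED_DOCROOT_OPTIONS = {"ro", "nosuid", "nodev"}


def parse_fstab_line(line):
    """Split an fstab entry into its fields; return None for comments and short lines."""
    fields = line.split()
    if len(fields) < 4 or line.lstrip().startswith("#"):
        return None
    return {
        "device": fields[0],
        "mountpoint": fields[1],
        "fstype": fields[2],
        "options": set(fields[3].split(",")),
    }


def missing_options(line, required=REQUIRED_DOCROOT_OPTIONS):
    """Return the sorted list of required mount options absent from the entry."""
    entry = parse_fstab_line(line)
    if entry is None:
        raise ValueError("not a mount entry")
    return sorted(required - entry["options"])


if __name__ == "__main__":
    docroot = "/dev/xvdb1 /var/www/html/docroot xfs defaults,ro,nosuid,nodev,nofail 1 2"
    files = "/dev/xvdb2 /var/www/html/docroot/sites/default/files xfs defaults,nosuid,nodev,nofail 1 2"
    print(missing_options(docroot))
    print(missing_options(files))
```

For the writable files volume, the missing ro option is expected; for the docroot, any missing option should raise an alert.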

If a read-only filesystem is not possible, removing write permissions from web-facing files and folders (docroot and below) can help increase the security of your web application. In this case, your engine (PHP) should disallow specific functions (the disable_functions configuration directive). In addition, web server access to files in the writable directories (those storing uploaded media files, caches, logs and temporary files) should be routed through index.php rather than served directly, at some cost to performance.

The read-only filesystem requires an external “runner” (or a continuous integration system, such as Jenkins) to execute the necessary mounting and re-mounting operations during the provisioning of the updated web application files. An excellent way to offer the read-only filesystem is to have an external volume that is writable by your CI system but is mounted into your web root as a read-only volume.

Filesystem permissions modules, such as the one for should be avoided. Hackers normally have to exploit a vulnerability just to gain the ability to modify filesystem permissions, whereas such a module hands them a convenient API to modify your server’s file permissions via PHP.

Manual management — a burden with passive security

To summarise, passive security comes down to the following maintenance items:

Keeping the hosting platform up to date

Keeping the web application up to date

Mounting the web application files in a read-only filesystem and maintaining limited access to writable volumes

Maintaining good log monitoring

Maintaining an alerting system

For large organisations that manage their hosting environments themselves, keeping the systems up to date and correctly configured may be a complex task. Tools like Ansible can help maintain platform configuration and apply changes across multiple systems. Moving these tasks to a well-established managed hosting vendor may reduce this burden and free up team resources for other useful tasks.

A small organisation could benefit from moving their web application to a managed hosting vendor, such as Acquia, Platform.sh or amazee.io.

Having active security in place can help minimise the maintenance burden and automate most of the web application security. Active security doesn’t patch the web application itself, but it offers a way to patch vulnerabilities virtually via a set of rules. By scanning incoming and outgoing traffic, an active security system can block malicious traffic before it reaches even a vulnerable web application.

Overview of victim profiling — how I would do it

Let’s consider a simple example that outlines why having only passive security in place is not enough. Even though passive security makes your web application immune to known web attacks, it leaves the site exposed during the delay between a security patch being released and being applied. Unknown vulnerabilities could also remain exploitable for years.

Why passive security alone may not be enough

Let’s consider this scenario, which is a widespread one.

Say we have a hypothetical client, Waterco, who runs their Drupal 8 website in Acquia Cloud. The website is maintained by an internal development team. The Waterco dev team has only one developer at the moment, who handles security patching and occasional website enhancements.

On day D, a critical security release is announced, but the Waterco developer is on annual leave. This patching delay exposes the Waterco website to a possible data breach. Once the security patch is released, a vulnerability exploit could be developed within an hour or less. Whether the site is defaced, hacked or exploited in any other way, such an event represents brand damage to Waterco.

Website exploitation takes time, but a malicious party that knows the victim website well could attempt the exploit without delay, while Waterco’s developer is on annual leave. Malicious users may probe a site for some time, slowly building a victim profile such as:

Application type and version

Installed modules, features and their versions

Any system data that is exposed to the frontend (PHP version, web server type and version, operating system and so on)

Type of security in place (or lack of it)

What previous exploitation attempts were successful or partially successful

If I were “them”, I would monitor the known CMS type (Drupal 8: ) and any modules ( ) for security announcements. As soon as an announcement is published and a patch is released to the public, I would attempt to reverse engineer an exploit and apply it on all relevant sites in my “profile”.
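One item in the profile above, system data exposed to the frontend, is easy to check on your own site before someone else does. Here is a minimal Python sketch that flags response headers revealing software versions. The header list and sample values are illustrative assumptions, not a complete inventory.

```python
# Response headers that commonly reveal software names and versions (assumption).
DISCLOSING_HEADERS = ("server", "x-powered-by", "x-generator")


def disclosed_versions(headers):
    """Return the disclosing headers whose values contain a version-like digit."""
    findings = {}
    for name, value in headers.items():
        if name.lower() in DISCLOSING_HEADERS and any(ch.isdigit() for ch in value):
            findings[name] = value
    return findings


# Hypothetical response headers used only for demonstration.
SAMPLE_HEADERS = {
    "Server": "Apache/2.4.6 (CentOS)",
    "X-Powered-By": "PHP/7.0.27",
    "X-Generator": "Drupal 8 (https://www.drupal.org)",
    "Content-Type": "text/html; charset=UTF-8",
}

if __name__ == "__main__":
    for name, value in disclosed_versions(SAMPLE_HEADERS).items():
        print(f"{name}: {value}")
```

Each header it reports is one less thing an attacker has to guess when building a profile of your site.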

Patch window risks

Whether we consider operating system-level updates, the LAMP stack or the web application, there is always a delay between the release of an updated software package and its application. With the LAMP stack, we explored the yum-cron and unattended-upgrades packages that can do the hard work in a timely way. When it comes to the web application, automated upgrades may not be possible. Let’s have a look at how drupal.org approaches security update announcements.

Drupal.org announces security updates every Wednesday at 10am. In the case of a critical security release, an in-advance notification could be published (like this one ).

Despite well-handled, in-advance announcements, it takes time to apply and test the update. Time zone differences also affect when the patch can be applied. In some cases, there might be other delays in updating your site to the “immune” version.

Whether it is just one hour, one day or one week, these delays put a vulnerable website at risk of being breached. To minimise risks during the patch window, you should be ready to patch your systems at the announced date and time. Patching risk can be reduced by having automated tests in place. Either way, it’s a good idea to patch ASAP and deal with possible breakages later. The Australian Signals Directorate recommends patching ‘extreme risk’ vulnerabilities within 48 hours as part of its Essential Eight. You may also like to read our blog on the Essential Eight.
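Measuring the patch window makes it easier to see whether you meet a target such as 48 hours, especially when the release and your team sit in different time zones. Below is a minimal Python sketch of that arithmetic. The dates, the fixed UTC+10 offset and the function names are hypothetical examples.

```python
from datetime import datetime, timedelta, timezone

# Target patch window, matching the 48 hours mentioned above.
TARGET = timedelta(hours=48)


def exposure_window(released_at, patched_at):
    """Return the time between a security release and the patch reaching production."""
    if released_at.tzinfo is None or patched_at.tzinfo is None:
        raise ValueError("use timezone-aware datetimes")
    return patched_at - released_at


def within_target(released_at, patched_at, target=TARGET):
    """Return True if the patch was applied within the target window."""
    return exposure_window(released_at, patched_at) <= target


if __name__ == "__main__":
    # Hypothetical timestamps: release announced in UTC, patch applied in UTC+10.
    local = timezone(timedelta(hours=10))
    released = datetime(2018, 3, 28, 17, 0, tzinfo=timezone.utc)
    patched = datetime(2018, 3, 30, 9, 0, tzinfo=local)
    print(exposure_window(released, patched), within_target(released, patched))
```

Using timezone-aware timestamps avoids the common mistake of comparing a UTC release time with a local deployment time.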

Conclusion

As we explored in this article, passive security requires a lot of time, experience and team availability during critical security patch releases. The good news is that there are ways to stop malicious traffic from exploiting your website, but this is the topic for our next article ‘Active security in web applications’. Stay tuned.