A presentation

We recently presented to one of Australia’s leading universities (an existing client), suggesting containerisation as a key strategy for their new platform. Part of our team for the project was Des from CitoPro. We’ve worked with Des on other projects, and he co-presented with us on containerisation for the university. This blog post draws on some key messages from Des’s presentation (and his diagrams!) and gives a general overview of containerisation and some of the tools used to execute this strategy.

What are containers?

Containers came to prominence in 2013 with the launch of the open source project Docker. They are named after the standardised containers used in the shipping industry because the concept is similar: in the software world, a container is a uniform structure in which any application can be stored, transported and run.

Containers serve a similar function to virtual machines (VMs). Both are virtualisation methods for application deployment. However, VMs virtualise the hardware, so each one runs its own guest operating system, whereas containers share the host’s operating system.

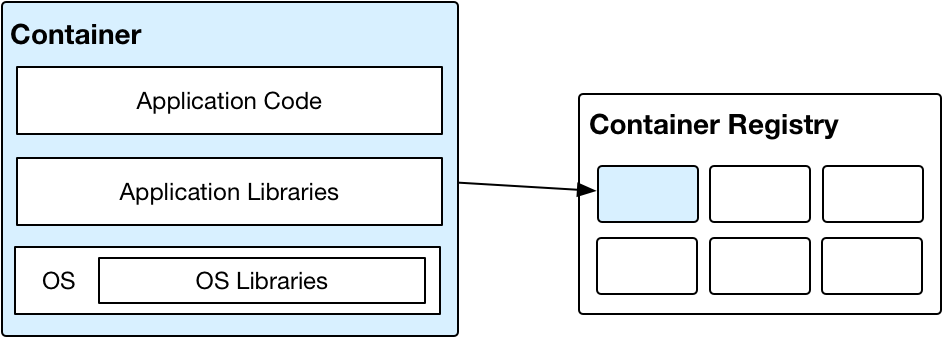

A container is a way to package an application together with its dependencies, which makes the application easy to install and run.

How containers work

A container surrounds an application in its own operating environment and can be placed on any host machine with a compatible container runtime. The application runs in the userspace of the host operating system, an area of memory that’s kept separate from the OS kernel, and it does not need a hypervisor to run. (This compares to the ‘old method’ of using VMs, where each application needs a guest operating system and therefore an underlying hypervisor.) This is why containers are generally faster than VMs: instructions don’t pass through a guest OS and a hypervisor to reach the CPU. Containers are also smaller and can typically be started in seconds, rather than the minutes a VM can take.

Developers still decide what goes into the container, including the dependencies. Containers simply give them a better way to package those things.

A container image

A container is a running instance of a container image. The most common tool for creating containers is the open source tool Docker, so a Docker image is simply a container image created with Docker.

To update an application, developers change the code in the container image, then redeploy the image to run on the host OS.

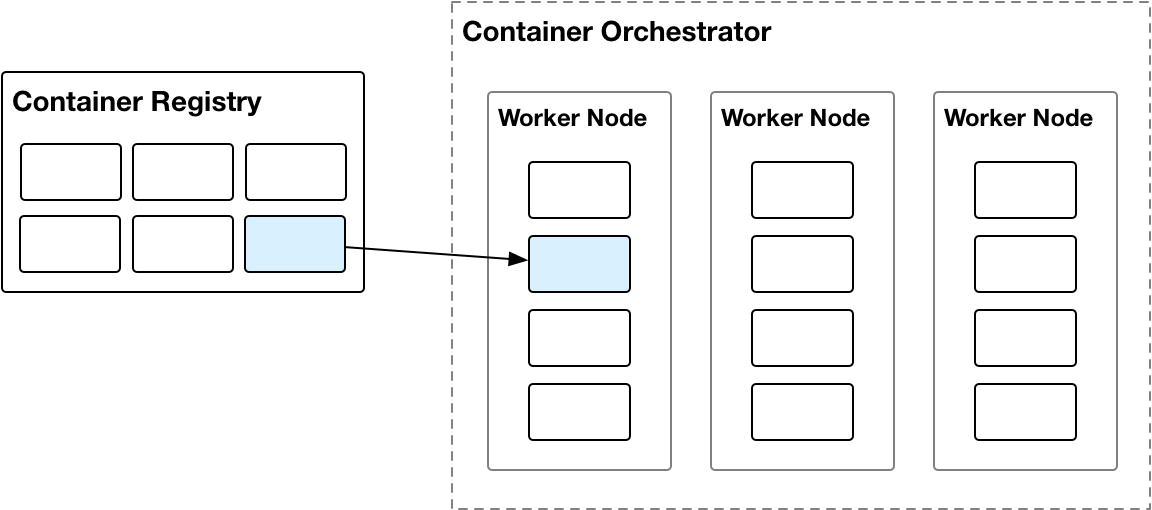

Container images are pushed to a container registry.
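As a rough illustration of how an image name ties an image to a registry, the Python sketch below splits a Docker-style image reference into registry, repository and tag. It follows Docker's usual naming conventions (the default registry `docker.io` and the default tag `latest`) but is a simplification: digests (`@sha256:...`) are not handled, and the helper name `parse_image_ref` and the host `registry.example.com` are made up for this example.

```python
from typing import NamedTuple


class ImageRef(NamedTuple):
    registry: str
    repository: str
    tag: str


def parse_image_ref(ref: str) -> ImageRef:
    """Split a Docker-style image reference into its parts (digests not handled)."""
    first, sep, rest = ref.partition("/")
    # The first path component is a registry host only if it looks like one.
    if sep and ("." in first or ":" in first or first == "localhost"):
        registry, remainder = first, rest
    else:
        registry, remainder = "docker.io", ref
    # A tag is whatever follows a colon in the last path component.
    last_slash = remainder.rfind("/")
    colon = remainder.rfind(":")
    if colon > last_slash:
        repository, tag = remainder[:colon], remainder[colon + 1:]
    else:
        repository, tag = remainder, "latest"
    return ImageRef(registry, repository, tag)


print(parse_image_ref("registry.example.com/team/web:1.4.2"))
```

An image pushed under a name that starts with a registry host goes to that registry; a name without one is assumed to live on the default public registry.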

From there, the container lifecycle is managed by the container orchestrator.

The container orchestrator provides specific services to the containers, such as service discovery, self-healing, networking, storage, etc.
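Self-healing is easiest to picture as a loop that compares the desired state with what is actually running. The Python sketch below shows one pass of such a loop under simplifying assumptions: containers are just names, health is a set of names that passed a check, and the function `reconcile` and the `web-N` naming scheme are invented for illustration. It is not how any particular orchestrator is implemented.

```python
def reconcile(desired: int, running: list[str], healthy: set[str],
              prefix: str = "web") -> list[str]:
    """Return the container names that should be running after one pass."""
    # Self-healing: drop containers that failed their health check.
    survivors = [name for name in running if name in healthy]
    # Start replacements until the desired count is reached.
    next_id = 0
    while len(survivors) < desired:
        candidate = f"{prefix}-{next_id}"
        if candidate not in survivors:
            survivors.append(candidate)
        next_id += 1
    # Scale down if more healthy containers exist than were requested.
    return survivors[:desired]


# web-1 has failed its health check, so a replacement is started.
print(reconcile(3, ["web-0", "web-1", "web-2"], {"web-0", "web-2"}))
```

An orchestrator repeats a comparison like this continuously, which is why a crashed container is replaced without anyone having to intervene.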

Key benefits of containerisation

There are many benefits to containerisation, which is why it’s becoming such a widely used approach. Some of the benefits include:

More portable (than VMs) — an application container can run on any system and in any cloud without requiring code changes as long as Docker is installed on the systems. Developers also don’t need to manage guest OS variables or library dependencies.

Faster and more efficient (than VMs) — application containers use fewer resources than VMs, because you don't need a full OS to underpin each application. Efficiencies are gained across memory, CPU and storage.

Greater flexibility — for example, developers can create a container that holds a new library when working on variations of the standard image.

Reproducibility — you can deploy the image as many times as you want and it’s the same image, so you get the same end result.

Simple packaging format — container images can be created with a single command and pushed to a container registry, so there is no need for additional packaging tools such as make or RPM.

Rapid and consistent deployment of workloads — containers can be started within seconds and allow much faster scaling capabilities than with VMs. A container orchestrator is constantly monitoring the resource usage of the containers and will automatically start additional containers to handle traffic spikes.

Robust runtime environment for scaling and self-healing — the same infrastructure can also support more application containers than VMs.

Standard management interface — because Docker is open source and currently the most popular container format, many tools support it. For example, a Docker container can be run by Kubernetes, Docker Swarm, Amazon ECS, etc.

Can avoid vendor lock-in — via open source offerings Docker and Kubernetes.
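To make the automatic scaling mentioned above more concrete, here is a small Python sketch of the scaling rule documented for Kubernetes' Horizontal Pod Autoscaler: the desired replica count is the current count multiplied by the ratio of the observed metric to the target metric, rounded up. The function name, the default limits and the sample numbers are assumptions for this example, and the real autoscaler adds refinements (such as a tolerance band) that are left out here.

```python
import math


def desired_replicas(current_replicas: int, current_metric: float,
                     target_metric: float, min_replicas: int = 1,
                     max_replicas: int = 10) -> int:
    """Scale the replica count in proportion to load, within fixed limits."""
    raw = math.ceil(current_replicas * current_metric / target_metric)
    # Clamp so a traffic spike cannot scale beyond the configured maximum.
    return max(min_replicas, min(max_replicas, raw))


# 4 containers at 90% CPU with a 60% target: scale out to 6.
print(desired_replicas(4, 90, 60))
```

The same rule scales back in when load drops, so capacity follows demand in both directions.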

Kubernetes

Kubernetes is a tool that enables automatic deployment, scaling and management of application containers. Like Docker, Kubernetes is open source, although it was originally built by Google. Kubernetes groups containers that make up an application into logical units for easy management and discovery.

Kubernetes lists its key features as:

Automatic binpacking — Automatically places containers based on their resource requirements and other constraints, while not sacrificing availability.

Horizontal scaling — Scale your application up and down with a simple command.

Automated rollouts and rollbacks — Changes are progressively rolled out while monitoring application health. If something goes wrong, Kubernetes rolls back the change.

Storage orchestration — Works with different storage systems, such as storage from public cloud providers like GCP and AWS, or network storage systems such as NFS and Gluster.

Service discovery and load balancing — Kubernetes gives containers their own IP addresses and a single DNS name for a set of containers, and can load-balance across them.

Secret and configuration management — Deploy and update secrets and application configuration without rebuilding the image or exposing secrets in the stack configuration.

Batch execution — Kubernetes can manage batch and CI workloads, replacing containers that fail, if required.
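Of the features above, automatic binpacking is perhaps the least self-explanatory, so here is a minimal Python sketch of the idea. It uses a simple first-fit decreasing heuristic on memory requests only. This is a stand-in chosen for clarity, not the Kubernetes scheduler's actual algorithm, and the function name, node names and sizes are invented.

```python
def place_containers(requests: dict[str, int],
                     node_capacity: dict[str, int]) -> dict[str, str]:
    """Assign each container to a node using first-fit decreasing on memory (MiB)."""
    free = dict(node_capacity)
    placement: dict[str, str] = {}
    # Place the largest requests first so small ones do not squeeze them out.
    for name, need in sorted(requests.items(), key=lambda kv: kv[1], reverse=True):
        for node, available in free.items():
            if need <= available:
                placement[name] = node
                free[node] = available - need
                break
        else:
            raise ValueError(f"no node has {need} MiB free for {name}")
    return placement


requests = {"api": 600, "db": 900, "cache": 300, "worker": 100}
nodes = {"node-a": 1024, "node-b": 1024}
print(place_containers(requests, nodes))
```

Four containers fit onto two nodes here because each container declares what it needs, which is what allows an orchestrator to pack workloads tightly without overcommitting a node.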

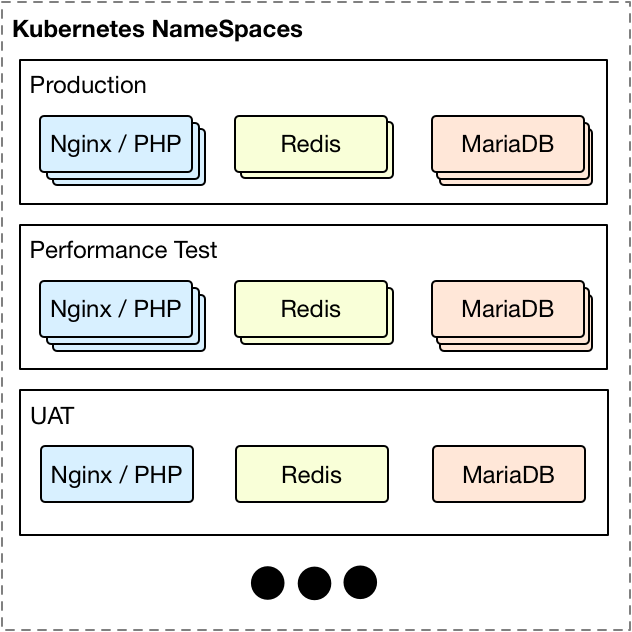

Kubernetes uses namespaces to map containers into environments.

A namespace contains one or more pods (each pod is a group of one or more containers) and/or other Kubernetes resource types.
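The relationship can be sketched as a small data model in Python. The classes below are illustrative only and are not part of any Kubernetes client library. They capture two points: a pod groups one or more containers, and pod names only need to be unique within a namespace, so the same pod name can exist in both a development and a staging namespace.

```python
from dataclasses import dataclass, field


@dataclass
class Pod:
    name: str
    containers: list[str]  # image names of the containers in this pod


@dataclass
class Namespace:
    name: str
    pods: dict[str, Pod] = field(default_factory=dict)

    def add_pod(self, pod: Pod) -> None:
        # Names must be unique inside a namespace, but not across namespaces.
        if pod.name in self.pods:
            raise ValueError(f"pod {pod.name!r} already exists in {self.name!r}")
        self.pods[pod.name] = pod


dev = Namespace("development")
staging = Namespace("staging")
dev.add_pod(Pod("web", ["nginx", "log-shipper"]))
staging.add_pod(Pod("web", ["nginx", "log-shipper"]))
print(sorted(dev.pods), sorted(staging.pods))
```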

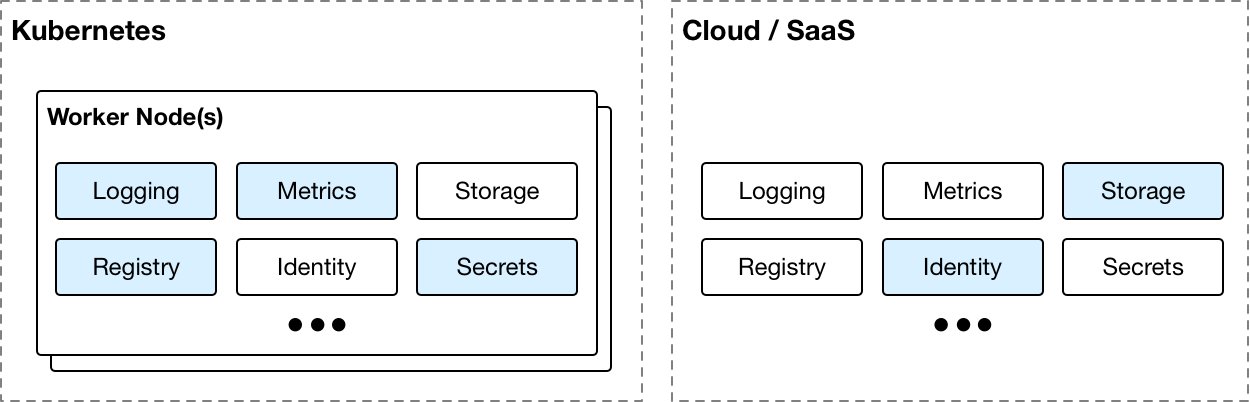

Cluster services

Services that support the applications can be deployed into the cluster, or a cloud provider/SaaS solution can be used (or a combination of both). If all support services are running within the cluster then it becomes easy to migrate between different cloud providers.

Kubernetes abstracts some cloud-specific services into normalised forms that can be used anywhere, for example load balancer and storage.
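The idea of a normalised form can be sketched in Python as an interface with interchangeable implementations behind it. Everything in this example is hypothetical (the class names, the `expose` method and the `example.com` addresses). It only illustrates the pattern of writing against one interface while the provider behind it varies.

```python
from typing import Protocol


class LoadBalancer(Protocol):
    """The normalised interface an application is written against."""

    def expose(self, service: str, port: int) -> str: ...


class CloudLoadBalancer:
    """Hypothetical stand-in for a cloud provider's managed load balancer."""

    def expose(self, service: str, port: int) -> str:
        return f"https://{service}.cloud.example.com:{port}"


class OnPremLoadBalancer:
    """Hypothetical stand-in for a load balancer in your own data centre."""

    def expose(self, service: str, port: int) -> str:
        return f"https://{service}.internal.example.com:{port}"


def publish(service: str, port: int, balancer: LoadBalancer) -> str:
    # The caller never needs to know which provider sits behind the interface.
    return balancer.expose(service, port)


print(publish("web", 443, CloudLoadBalancer()))
print(publish("web", 443, OnPremLoadBalancer()))
```

Because the application only asks for "a load balancer", moving it to another provider means swapping the implementation rather than rewriting the application.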

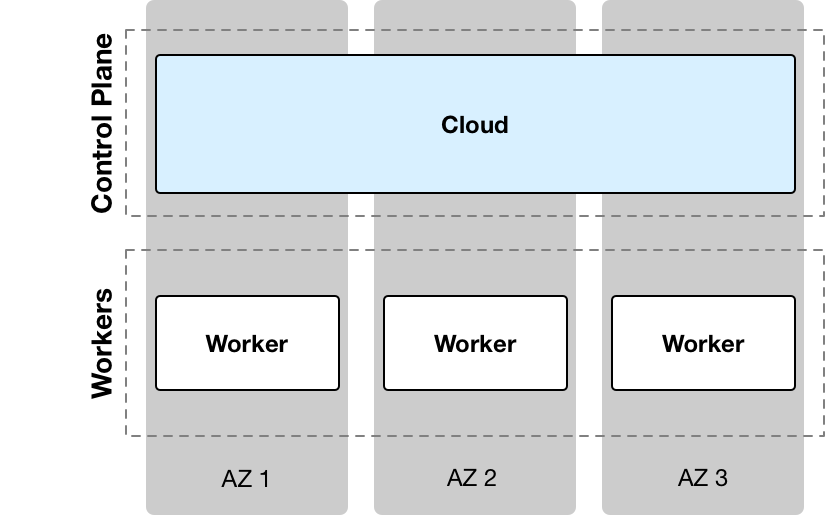

Kubernetes as a service (KaaS)

Most cloud providers now offer managed Kubernetes, also known as Kubernetes as a service. In this model, the provider takes care of the control plane and provides SLAs around it, and the user pays for the number of worker nodes needed.

Federated clusters

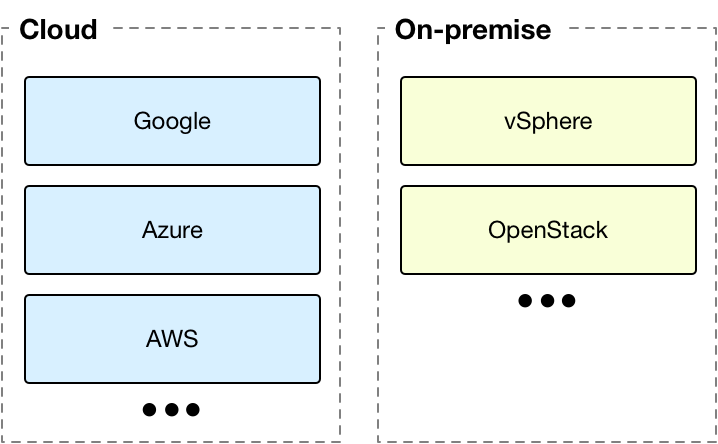

Multiple clusters can be used to provide additional redundancy and localised placement of workloads for a given region. The clusters can be any combination of cloud and on-premises.
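A minimal Python sketch of that placement logic, assuming each cluster is described only by a name, a region and a health flag: prefer a healthy cluster in the user's region, and fall back to any other healthy cluster if the local one is down. The cluster names, region labels and the `choose_cluster` function are invented for illustration and do not correspond to any federation tool's API.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Cluster:
    name: str
    region: str
    healthy: bool = True


def choose_cluster(user_region: str, clusters: list[Cluster]) -> Cluster:
    """Prefer a healthy cluster in the user's region, else any healthy cluster."""
    healthy = [c for c in clusters if c.healthy]
    if not healthy:
        raise RuntimeError("no healthy cluster available")
    local = [c for c in healthy if c.region == user_region]
    return (local or healthy)[0]


clusters = [Cluster("cloud-sydney", "au-east"), Cluster("onprem-perth", "au-west")]
print(choose_cluster("au-west", clusters).name)

# If the local cluster fails, traffic falls back to the remaining one.
degraded = [Cluster("cloud-sydney", "au-east"), Cluster("onprem-perth", "au-west", healthy=False)]
print(choose_cluster("au-west", degraded).name)
```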

Docker and Kubernetes

Containerisation took off with Docker in 2013, and in 2017 Docker incorporated Kubernetes into the Docker platform. The two systems now integrate to provide the benefits of containerisation together with the management capabilities of Kubernetes, and both are open source.

Containers in action — development and staging environments

Containers are a great way to separate the development and staging environments, because software within the container is isolated from the rest of the system. This helps to reduce conflicts if you’ve got teams working on different items in development and staging, but on the same infrastructure.

Get in touch

If you’d like to know more about containers, feel free to contact us using the form below or call us on 1300 727 952.