On this page:

- What is test automation?

- Who performs test automation?

- How to set up a team for automation testing

- How to architect a test automation framework

- Cultivating an organisational culture of test automation

- Understanding the test automation pyramid and its impact

- Unit tests

- Service level tests/acceptance tests

- End-to-end tests

- UI tests

- Some tips and tricks for test frameworks

- Choosing the right tooling for test automation

- Strategies for an efficient test automation framework

- Scaling/optimising your test automation framework

- Automated testing at Salsa

- Automated testing for Drupal projects

- Automated testing for Python projects

What is test automation?

Testing is an intrinsic part of every software project. A tester needs to validate functionality against the customer’s needs and make sure all the requirements work properly. Doing this by hand is called manual testing. As the project evolves, new requirements and functionality come in, and testers need to make sure that functionality that’s already completed still works correctly. This is called regression testing. Running regression tests manually can be time-consuming, so automating them is very helpful and reduces manual effort. This is called test automation, or automation testing.

Many companies begin this process by setting up a proof of concept. When building automation frameworks, it’s very important to have a structured strategy that ensures a return on investment (ROI) for both the organisation and the end client.

The paragraphs below cover the steps required:

- Cultivate an organisational culture of test automation

- Advocate test automation initiatives across the organisation

- Brainstorm to choose the right tool for test automation

- Ensure developers help and support testers in test automation

- Think about how the test automation framework can be used for projects across the organisation

- Optimise and scale your automation project for your business needs

- Understand how to bring the best ROI and help the organisation grow

First, we need to ask: why should we automate our testing process, and what are we trying to accomplish? Every new process or improvement needs proper planning before execution. Questions to consider include:

Are we trying to reduce our regression testing time? If so, automating regression tests is a good idea: manual testers can focus on testing new functionality while the automated tests cover regression.

Are we planning to run tests for every deployment in the CI/CD pipeline? Service or product companies that ship multiple features a day cannot manually retest every feature before each release to production. They need automated tests plugged into the CI/CD pipeline to stop defects from reaching the environments they deploy to.
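As a minimal sketch of how a test suite can gate a pipeline, assuming a Python project that uses the standard-library `unittest` runner: a CI step only needs to run the tests and return a non-zero exit code on failure, which CI/CD tools generally treat as a failed stage. The `gate` function is a hypothetical helper, not part of any specific CI tool.

```python
import unittest


def gate(suite: unittest.TestSuite) -> int:
    """Run a test suite and return a process exit code: 0 if every test passed, 1 otherwise."""
    # The runner prints a pass/fail summary to stderr, which ends up in the CI log.
    result = unittest.TextTestRunner(verbosity=1).run(suite)
    # CI/CD tools generally treat any non-zero exit code as a failed pipeline step.
    return 0 if result.wasSuccessful() else 1
```

A pipeline step could then run a small script ending in `sys.exit(gate(unittest.defaultTestLoader.discover("tests")))`, where `tests` is an assumed directory name, so a failing regression test stops the deployment.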

If we can answer these questions honestly, we’ll have a common goal for test automation. That goal, or the problem we’re trying to solve, needs to be communicated across the organisation for better ROI.

Who performs test automation?

Identifying the roles and responsibilities for the team that performs automation testing is very important. Team members supporting automation testing are mainly responsible for:

- Setting up the test framework

- Writing automation test scripts

- Updating existing test scripts and adding new ones for the latest functionality

- Running the tests whenever a deployment is done, and monitoring the results

- Reporting the results to stakeholders

How to set up a team for automation testing

Option 1: The development team writes automation test scripts and manual testers monitor the reports. However, this approach can leave developers without enough time to ship new features in the sprint, which in turn pushes more development tasks into later sprints.

Option 2: Manual testers are trained to write automation scripts. The downside is that they then won’t have enough time to test the feature stories in the current sprint, so this option also doesn't seem practical.

Option 3: Dedicated automation testers write the automation scripts and monitor the results. This option reduces the impact on developers and existing manual testers, but it needs to be built into project planning.

Automation test projects should be treated as projects in their own right, so organisations need to plan and invest accordingly, keeping in mind the returns generated.

How to architect a test automation framework

We now understand why we need test automation and who will write the automated testing scripts, but we need to answer a few more questions for the best result.

Do we have a full list of tests that need to be automated?

Before answering this question, we need to make sure testers have a full understanding of the product. They need to identify which manual tests should be automated and which can stay manual. They might choose only critical functionality tests, or focus on user interface (UI) tests such as font colour and element dimensions. They can discuss the priority of tests to be automated with their engagement managers or product owners. In projects with tight timelines, writing test scripts for critical functionality makes more sense.

Which tooling do we need to write tests and generate reports?

The team needs to plan thoroughly and discuss with stakeholders to choose the best tool for automating test scripts. Licensed versus open source tools need to be discussed with product owners. Teams should be aware of the pros and cons of each tool they plan to use for automation. The reporting library used to generate test reports also needs to be chosen and set up carefully.

Where will tests be kept?

Test automation architects need to plan where the tests will live. Do they want them in a separate location outside the project, or inside the project repository? If tests are kept inside the project, they can interact with project code easily, for example to get a list of users from the backend. Some test frameworks have base and helper classes that can also be extended for another project. This saves rework and helps keep the focus on writing critical test scripts.
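To illustrate how base and helper classes can be shared between projects, here is a minimal Python sketch. `BaseWebTest`, `ProjectATest`, the `base_url` attribute and the `url_for` and `assert_status_ok` helpers are all hypothetical names; a real framework would typically also put login, browser driver set-up or reporting hooks in the base class.

```python
import unittest
from urllib.parse import urljoin


class BaseWebTest(unittest.TestCase):
    """Reusable base class: shared helpers that any project's tests can inherit."""

    base_url = "http://localhost:8080/"  # overridden per project; keep the trailing slash

    def url_for(self, path: str) -> str:
        # Build an absolute URL from a project-relative path.
        return urljoin(self.base_url, path.lstrip("/"))

    def assert_status_ok(self, status: int) -> None:
        # Shared assertion so every project reports HTTP failures the same way.
        self.assertTrue(200 <= status < 300, f"Unexpected HTTP status {status}")


class ProjectATest(BaseWebTest):
    """Project-specific tests only override configuration and add their own cases."""

    base_url = "https://project-a.example.com/"

    def test_about_page_url(self):
        self.assertEqual(self.url_for("/about"), "https://project-a.example.com/about")
```

A second project would subclass `BaseWebTest` in the same way, reusing the helpers and changing only `base_url` and its own test cases.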

How are we going to run our test scripts?

Are we running test scripts on a local machine and sharing reports via email, or do we have a Jenkins instance in the cloud that runs our tests and sends reports periodically? We need to plan the costs and resources needed for whichever approach we choose.

Cultivating an organisational culture of test automation

An organisation or team has developers, product owners, testers, etc. Every member of the team should understand the value of test automation. In agile projects, teams are cross-functional, which means every member should contribute towards the initiative.

Every member of the team should understand the common goal for test automation and contribute towards it for the success of the project.

Role of product owners: Test architects and product owners should work together to identify the tests that need to be automated. Product owners should define the business priority of those tests. Test architects can give them feedback and hold a brainstorming session on this. This collaboration is critical for the success of test automation projects.

Role of developers: Developers can help by writing unit tests that reduce the number of bugs leaking into production when new functionality is pushed. By running these tests, along with functional tests if needed, developers can be confident that their changes don't affect existing features.

Role of testers: Testers write and update the test automation scripts and monitor the results. They also discuss product owners’ feedback on test cases so that critical cases are prioritised for automation. This continuous feedback loop enables better test coverage and contributes to the overall success of the project.

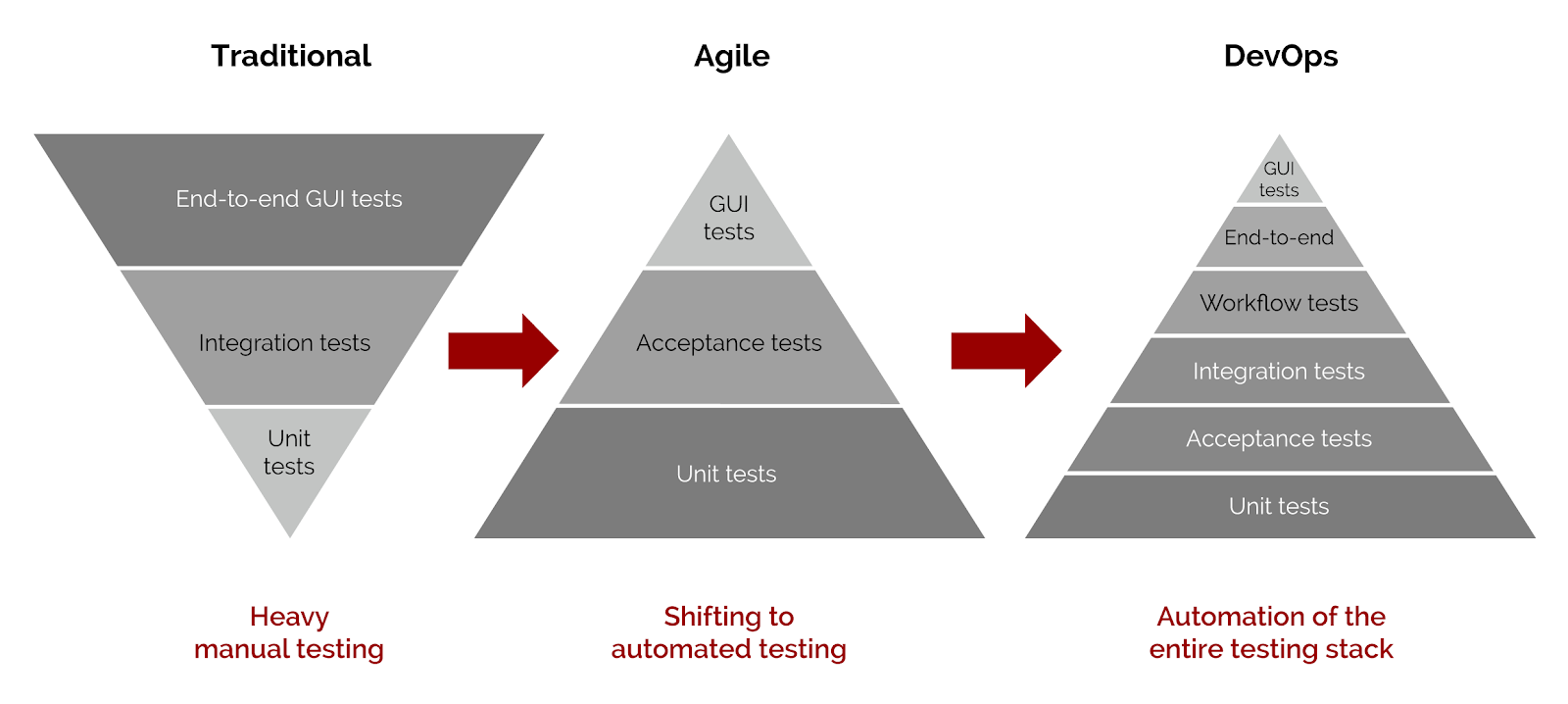

We’ve looked at why, who and how to strategise test automation and cultivate a test automation culture. Next, it’s important to consider the test automation pyramid, comparing traditional, agile and DevOps approaches.

Understanding the test automation pyramid and its impact

Unit tests

Writing tests at a very granular level helps prevent defects when components are integrated. Testing at code level is called unit testing and is mainly done by developers, who write tests that verify individual functions and similar units of code. Higher unit test coverage helps projects succeed in the long run. Ideally, all important functionality should have unit tests.
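As a small illustration, the sketch below unit-tests a single function in isolation with Python's built-in `unittest` module. `apply_discount` is a hypothetical function invented for the example; the tests check a normal case, an edge case and invalid input.

```python
import unittest


def apply_discount(price: float, percent: float) -> float:
    """Return the price after a percentage discount, rounded to cents."""
    if not 0 <= percent <= 100:
        raise ValueError("percent must be between 0 and 100")
    return round(price * (1 - percent / 100), 2)


class ApplyDiscountTest(unittest.TestCase):
    def test_regular_discount(self):
        self.assertEqual(apply_discount(80.0, 25), 60.0)

    def test_zero_discount_keeps_price(self):
        self.assertEqual(apply_discount(19.99, 0), 19.99)

    def test_invalid_percent_is_rejected(self):
        with self.assertRaises(ValueError):
            apply_discount(10.0, 150)
```

Saved in a file such as `test_pricing.py`, these tests would run with `python -m unittest`, and because they touch no database, browser or network, they run fast enough to execute on every commit.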

Unit tests form the base of the test pyramid. Traditional models tended to have lower unit test coverage, which increased test automation costs later.

Service level tests/acceptance tests

Writing tests for the API layer and backend functionality also improves project quality. If automated tests already cover behaviour at this level, testers don’t need to repeat those checks at the UI level. We should aim for good coverage here too.

End-to-end tests

End-to-end tests should be fewer in number and cover critical user journeys only, for example creating a blog content type from the backend and verifying that all its components appear on the frontend.

UI tests

Testers should keep UI test cases to a minimum because UI tests are lengthy and take time to write. For example, to place an order on a website through the UI, a test must select the product, add it to the cart and then go to the checkout. If the same behaviour is tested at the service level, for example by calling the checkout API with the product ID and checking the response, it saves time.
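A service-level version of the checkout example can be sketched with only the Python standard library. To keep it self-contained, a tiny local HTTP server stands in for the real backend; the `/api/checkout` endpoint, its JSON payload and the response fields are assumptions made for illustration, not a real API.

```python
import json
import threading
import unittest
from http.server import BaseHTTPRequestHandler, HTTPServer
from urllib.request import Request, urlopen


class FakeCheckoutHandler(BaseHTTPRequestHandler):
    """Stand-in for the real backend: accepts a product ID and returns an order."""

    def do_POST(self):
        length = int(self.headers.get("Content-Length", 0))
        payload = json.loads(self.rfile.read(length))
        body = json.dumps({"status": "confirmed", "product_id": payload["product_id"]}).encode()
        self.send_response(201)
        self.send_header("Content-Type", "application/json")
        self.send_header("Content-Length", str(len(body)))
        self.end_headers()
        self.wfile.write(body)

    def log_message(self, *args):
        pass  # keep test output quiet


class CheckoutApiTest(unittest.TestCase):
    @classmethod
    def setUpClass(cls):
        # Port 0 asks the operating system for any free port.
        cls.server = HTTPServer(("127.0.0.1", 0), FakeCheckoutHandler)
        cls.base_url = f"http://127.0.0.1:{cls.server.server_port}"
        threading.Thread(target=cls.server.serve_forever, daemon=True).start()

    @classmethod
    def tearDownClass(cls):
        cls.server.shutdown()
        cls.server.server_close()

    def test_checkout_by_product_id(self):
        # One API call replaces the UI steps: find product, add to cart, go to checkout.
        request = Request(
            f"{self.base_url}/api/checkout",
            data=json.dumps({"product_id": 42}).encode(),
            headers={"Content-Type": "application/json"},
            method="POST",
        )
        with urlopen(request, timeout=5) as response:
            self.assertEqual(response.status, 201)
            order = json.loads(response.read())
        self.assertEqual(order["status"], "confirmed")
        self.assertEqual(order["product_id"], 42)
```

Against a real environment, `base_url` would point at the deployed API instead of a local server; the test body, a single request plus assertions on the response, would stay the same.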

Some tips and tricks for test frameworks

UI locator strategy: Having unique identifiers in the document object model (DOM) helps testers write a unique locator for each element in their test automation framework. If every element has a distinct ID or CSS class, testers can write short, efficient XPath expressions.
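To show why unique IDs make for simpler, sturdier locators, here is a sketch using Python's standard-library `xml.etree.ElementTree`, which supports a limited subset of XPath. The markup and the `add-to-cart` ID are invented for the example; in a browser test, equivalent XPath expressions would be passed to a tool such as Selenium instead.

```python
import xml.etree.ElementTree as ET

# Hypothetical, well-formed page fragment (ElementTree cannot parse arbitrary HTML).
PAGE = """
<div>
  <form>
    <button class="btn">Back</button>
    <button class="btn primary" id="add-to-cart">Add to cart</button>
  </form>
</div>
"""

root = ET.fromstring(PAGE)

# Brittle: a positional path breaks as soon as a button is added or reordered.
positional = root.find("./form/button[2]")

# Robust: a unique ID identifies the element regardless of where it sits in the DOM.
by_id = root.find(".//button[@id='add-to-cart']")

print(positional.text, "|", by_id.text)
```

Both expressions find the same button today, but only the ID-based one keeps working if developers add another button before it.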

Seams: Seams are points in the system’s implementation that we can use to build more streamlined and reliable automated tests. Seams can be APIs, mocks, stubs or faked dependencies of the real system. Accessing a page directly through its URL is also a seam.

For example, suppose we have a test that checks a particular product. Instead of scripting a product search, the test can go directly to the product URL. This speeds up our test automation process.
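The product-URL seam can be sketched as follows, assuming the page is fetched through an injectable `http_get` function so a test can substitute a stub created with `unittest.mock`. `BASE_URL`, `product_url` and `load_product_page` are hypothetical names; with a browser driver, the same idea means navigating straight to the product URL rather than filling in the search form.

```python
from unittest import mock
from urllib.parse import urljoin

BASE_URL = "https://shop.example.com/"  # hypothetical site; keep the trailing slash


def product_url(product_id: int) -> str:
    # Seam 1: go straight to the product page instead of driving the search UI.
    return urljoin(BASE_URL, f"products/{product_id}")


def load_product_page(http_get, product_id: int):
    """Fetch a product page through an injectable HTTP function (seam 2)."""
    response = http_get(product_url(product_id))
    return response["status"], response["body"]


# In a test, a stub replaces the real HTTP client, so no network or search step is needed.
fake_get = mock.Mock(return_value={"status": 200, "body": "<h1>Blue kettle</h1>"})
status, body = load_product_page(fake_get, 42)
fake_get.assert_called_once_with("https://shop.example.com/products/42")
```

Passing the dependency in as a parameter is what creates the seam: production code supplies a real HTTP client, while tests supply a stub with a known response.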

Choosing the right tooling for test automation

We’ve covered various aspects around strategy for the automation test process. Tool selection is also very important when creating a test strategy.

Unit tests need to be written inside the project repository alongside the modules they test, usually in the same programming language as the module.

Service level tests are mostly written by testers. If we have dedicated automation testers they can use code-based tools like rest-assured, which will give them more flexibility in writing tests. If we have testers with less programming knowledge we need to keep in mind that they’ll need to invest time in learning the language and framework as well. Tools like and are helpful on that front.

For UI-level tests we have both coded and non-coded frameworks. Coded frameworks are more extensible but have a learning curve for new testers. Non-coded frameworks are easy to use but cannot be extended or modified much.

Strategies for an efficient test automation framework

When designing a test automation framework we must keep the following in mind:

Tests should be independent of each other: We should avoid making one test depend on another. Suppose we have 100 tests that depend on each other: if one of them fails, the whole set of tests fails. Independent tests can also run in parallel, which decreases execution time.
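A minimal sketch of why independence enables parallelism: each hypothetical test below builds its own data and shares no state, so the tests can run concurrently with `concurrent.futures`, and one failure does not affect the others. Real suites would normally rely on their framework's parallel runner (for example the pytest-xdist plugin for pytest) rather than hand-rolled threads; `make_cart` and `run_in_parallel` are illustrative names.

```python
from concurrent.futures import ThreadPoolExecutor


def make_cart():
    # Each test builds its own fixture instead of relying on another test's leftovers.
    return {"items": []}


def test_add_item():
    cart = make_cart()
    cart["items"].append("kettle")
    assert cart["items"] == ["kettle"]


def test_empty_cart():
    cart = make_cart()
    assert len(cart["items"]) == 0


def test_remove_item():
    cart = make_cart()
    cart["items"].append("toaster")
    cart["items"].remove("toaster")
    assert cart["items"] == []


def run_in_parallel(tests):
    """Run independent test functions concurrently and return {test name: passed?}."""

    def run(test):
        try:
            test()
            return test.__name__, True
        except AssertionError:
            return test.__name__, False

    with ThreadPoolExecutor() as pool:
        return dict(pool.map(run, tests))


results = run_in_parallel([test_add_item, test_empty_cart, test_remove_item])
```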

Test data should be unique: One test should not modify the test data used by another test, otherwise tests can fail unpredictably. Test data variables should also be named clearly.

Tests should be self-cleaning: Most tests create new data or modify existing data, so we should write scripts that clean up that test data. This is called tear-down. For example, if the test automation framework creates a new blog, it should delete it after successful validation.
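Unique test data and tear-down can be combined in a short `unittest` sketch. `FakeBlogApi` is an in-memory stand-in for a real CMS, and its `create` and `delete` methods are hypothetical. `uuid4` gives each run a unique title, and `addCleanup` registers the delete as soon as the post exists.

```python
import unittest
import uuid


class FakeBlogApi:
    """In-memory stand-in for a CMS API (hypothetical; swap in real HTTP calls)."""

    def __init__(self):
        self.posts = {}

    def create(self, title: str) -> str:
        post_id = str(uuid.uuid4())
        self.posts[post_id] = {"title": title}
        return post_id

    def delete(self, post_id: str) -> None:
        self.posts.pop(post_id, None)


API = FakeBlogApi()


class BlogPostTest(unittest.TestCase):
    def test_create_blog_post(self):
        # Unique title: no clash with data created by other tests or earlier runs.
        title = f"autotest-blog-{uuid.uuid4().hex[:8]}"
        post_id = API.create(title)
        # Tear-down: registered immediately, so it runs after the test even if it fails.
        self.addCleanup(API.delete, post_id)

        self.assertEqual(API.posts[post_id]["title"], title)
```

One design choice here: `addCleanup` runs whether or not the assertions pass, so a failed validation doesn't leave stray content behind to confuse the next run.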

Scaling/optimising your test automation framework

A test automation framework should be able to run across multiple environments, devices and browsers.

Multiple environments: No project runs on a single environment; we usually have development, test and production environments. We cannot write separate tests for each environment, and each environment has its own configuration, such as URL and login details. To tackle this, we should use an environment property file that holds these details, so the same tests can run against any environment.
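One way to implement an environment property file in Python is with the standard-library `configparser`, using one section per environment and an environment variable to choose between them. The section names, keys, URLs and the `TEST_ENV` variable are assumptions for this sketch; secrets such as passwords are better read from the CI system's secret store than committed to the file.

```python
import configparser
import os
from typing import Optional

# Example contents of a hypothetical environments.ini file.
ENVIRONMENTS_INI = """
[dev]
base_url = https://dev.example.com
username = test-user

[test]
base_url = https://test.example.com
username = test-user

[prod]
base_url = https://www.example.com
username = smoke-test-user
"""


def load_environment(ini_text: str, env_name: Optional[str] = None) -> dict:
    """Return the settings for one environment, chosen by name or the TEST_ENV variable."""
    parser = configparser.ConfigParser()
    parser.read_string(ini_text)
    name = env_name or os.environ.get("TEST_ENV", "dev")
    if not parser.has_section(name):
        raise KeyError(f"Unknown environment {name!r}; expected one of {parser.sections()}")
    return dict(parser[name])
```

A CI job could then set `TEST_ENV=test` and run exactly the same suite, with tests reading `base_url` from the returned settings instead of hard-coding it.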

Cross-browsers: For cross-browser testing, we can use a local setup, but that needs a machine with a good configuration. Alternatively, we can use cloud-testing tools like or Sauce that provide an API to run tests in multiple browsers and return the test results.

Devices: Our tests should be able to run on multiple devices. We can use either cloud solutions or frameworks like that run tests for native iOS and Android applications.

The success of a test automation project can be measured by how much it reduces regression testing time, how frequently teams receive feedback, and how many bugs are found during test execution. Overall, following a detailed strategy for setting up test automation makes it far more likely to succeed for the whole organisation.

Automated testing at Salsa

Below are some examples of how we’ve implemented automated testing at Salsa across both Drupal and Python projects.

Automated testing for Drupal projects

Developers wrote unit and behavioural tests for their own code. No test automation engineer was involved.

Why were developers writing tests themselves? For web applications, not everything can be tested with unit tests, so developers need to add functional or even integration tests to make sure newly introduced changes work. This also builds up a regression suite, which gives more flexibility for future changes with significantly less risk of introducing defects into existing functionality.

What are the tools? Drupal is built in PHP; for Drupal projects, is used for unit tests and is used for behavioural tests.

Why we’re using behaviour-driven development (BDD): As in any digital agency, projects have their time and budgeting constraints. At Salsa, we’re always finding the balance between costs and test coverage. BDD approaches allow us to quickly write test scripts using a centralised library of reusable test steps that are used to cover critical user journeys. We don’t have 100% coverage of the features we develop, but we have sufficient coverage for the functionality that is critical for our client’s business.
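The idea of a centralised library of reusable steps can be sketched in a few lines of Python. This is not how any particular BDD framework is implemented: `step`, `run_scenario` and the step phrases are hypothetical, and real tools add Gherkin parsing, hooks and reporting. Each step maps a regular expression to a function, so scenarios written in plain language reuse the same steps across features.

```python
import re

STEPS = []  # central registry shared by every feature


def step(pattern):
    """Register a reusable step under a regular expression."""
    def register(func):
        STEPS.append((re.compile(pattern), func))
        return func
    return register


@step(r'^I am logged in as "(?P<role>[^"]+)"$')
def logged_in(context, role):
    context["user_role"] = role


@step(r'^I visit "(?P<path>[^"]+)"$')
def visit(context, path):
    context["page"] = path


@step(r'^I should be on "(?P<path>[^"]+)"$')
def should_be_on(context, path):
    assert context.get("page") == path, f"expected {path}, got {context.get('page')}"


def run_scenario(text):
    """Match each Given/When/Then/And line against the registry and run its step."""
    context = {}
    for line in text.strip().splitlines():
        sentence = re.sub(r"^\s*(Given|When|Then|And)\s+", "", line)
        for pattern, func in STEPS:
            match = pattern.match(sentence)
            if match:
                func(context, **match.groupdict())
                break
        else:
            raise LookupError(f"No step matches: {sentence!r}")
    return context


SCENARIO = """
Given I am logged in as "editor"
When I visit "/node/add/blog"
Then I should be on "/node/add/blog"
"""
```

Because the steps live in one registry, covering a new critical journey often means writing only a new scenario in plain language, not new test code.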

Salsa projects using this approach include:

- Single Digital Presence

- Victorian Department of Health and Human Services

- Victorian Agency for Health Information

Automated testing for Python projects

Everything is the same as for our Drupal (PHP) projects, except that we use Python frameworks for unit and BDD testing.

Tools

Projects

Data QLD’s CKAN data portal (several extensions)